Zero Trust has shifted how organizations think about security. Historically, we tried to identify everything that was “bad” and block it. A Zero Trust approach instead identifies what is “good” by verifying the source of communication and allowing it.

To achieve Zero Trust, you must:

- Identify and manage the identity of any entity you may want to trust.

- Enforce the policies that apply to that identity using Zero Trust segmentation.

But one of the perceived challenges with implementing Zero Trust segmentation and enforcing policy is how complete your knowledge of every entity on the network needs to be.

In a recent survey commissioned by Illumio, 19 percent of respondents said they could not implement Zero Trust because they did not have perfect data.

Attendees at Illumio’s Zero Trust virtual workshop series expressed similar concerns, highlighting that they struggle with Zero Trust because of a lack of:

- Accurate information on their systems.

- Knowledge of what servers or workloads exist.

- Understanding of which applications are connected to which resources.

In many organizations, a Configuration Management Database (CMDB), an IP Address Management system, cloud tags, a spreadsheet or a similar repository system should ideally be the “source of truth” for this information. However, these information repository systems can be inaccurate — or may not exist at all.

It is important to be able to use the information that exists to build a structured labelling model. Without one, your environment will look like this.

Your CMDB Isn’t the Be-All and End-All to Labelling

One of the main problems that organizations face is naming hygiene. As systems evolve and personnel change, naming conventions also change.

For example, what starts as ‘Production’ becomes ‘Prod,’ then ‘prod.’ This means that rules written to enforce Zero Trust policy against the original name can become obsolete once the names change.

A free text style of labelling can also lead to many inconsistencies in rule writing. Inaccurate or limited labelling of resources undoubtedly makes it difficult to understand your environment.

Policy enforcement should not be limited by the accuracy or completeness of either the CMDB or any other information repository. If naming is inconsistent, there should be a way of working around this.
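
One way to work around inconsistent naming is to map every spelling that has appeared over time onto a single canonical value before any rule refers to it. The following is a minimal sketch in Python, not a description of how any particular product does it. The alias table and the function name `normalize_environment` are hypothetical and would need to reflect the spellings found in your own data.

```python
from typing import Optional

# Hypothetical alias table: each spelling seen in the repository maps to one
# canonical environment label. Extend it with the variants in your own data.
ENV_ALIASES = {
    "production": "Production",
    "prod": "Production",
    "development": "Development",
    "dev": "Development",
}


def normalize_environment(raw: str) -> Optional[str]:
    """Return the canonical label for a free-text value, or None if unknown."""
    return ENV_ALIASES.get(raw.strip().lower())


for value in ["Production", "Prod", "prod"]:
    print(value, "->", normalize_environment(value))
```

Returning `None` for an unrecognized value, rather than guessing, keeps unknown names visible so that someone can review them and extend the table.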

For those without a CMDB, there is nothing wrong with using something much simpler. For example, most systems can import and export data in CSV format, which makes it a very portable way to manage data.
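
As an illustration of how little is needed, the sketch below reads a small CSV inventory with Python's standard `csv` module and lists the workloads that still have gaps. The column names (`hostname`, `ip`, `environment`, `role`) and the sample rows are assumptions made for the example, not a required schema.

```python
import csv
import io

# Hypothetical export from a spreadsheet; the last row has no labels yet.
SAMPLE_CSV = """hostname,ip,environment,role
web01,10.1.0.11,Production,Web
db01,10.1.0.21,Production,Database
build7,10.2.0.5,,
"""


def load_inventory(text):
    """Parse CSV text into {hostname: record}, turning blank cells into None."""
    inventory = {}
    for row in csv.DictReader(io.StringIO(text)):
        record = {key: (value.strip() or None) for key, value in row.items()}
        inventory[record["hostname"]] = record
    return inventory


inventory = load_inventory(SAMPLE_CSV)

# Workloads with an incomplete record are the ones other sources must fill in.
missing = sorted(
    host
    for host, record in inventory.items()
    if record["environment"] is None or record["role"] is None
)
print(missing)
```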

How Illumio Simplifies and Automates Workload Labelling

Illumio has developed a simple set of tools that radically simplify the process of naming and applying Zero Trust segmentation policies. Having the tools is only one part of the solution, though; applying them correctly is what makes the difference.

The key to implementing Zero Trust is consistency. A structured naming scheme makes security policies portable between different environments (such as various hypervisors and clouds).

For example, the IP address model works because it is always the same. Every device attached to the network expects the format AAA.BBB.CCC.DDD/XX. Without this, we would not have any form of communication. The same is true for labelling workloads. Without a consistent model, the security of an organization can be compromised.
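
To make the idea of a consistent model concrete, here is a minimal sketch of a label with a fixed set of fields and a controlled vocabulary, so that a free-text value such as ‘prod’ is rejected rather than silently accepted. The class `WorkloadLabel`, its two fields and the allowed values are hypothetical choices for illustration only.

```python
from dataclasses import dataclass

# Hypothetical controlled vocabulary: only these values are valid labels.
ALLOWED = {
    "environment": {"Production", "Development"},
    "role": {"Web", "Database"},
}


@dataclass(frozen=True)
class WorkloadLabel:
    """A label with a fixed shape, in the same spirit as a fixed address format."""

    environment: str
    role: str

    def __post_init__(self):
        # Reject any value that is not in the agreed vocabulary.
        for field_name, allowed in ALLOWED.items():
            value = getattr(self, field_name)
            if value not in allowed:
                raise ValueError(
                    f"{field_name}={value!r} is not one of {sorted(allowed)}"
                )


label = WorkloadLabel(environment="Production", role="Web")
print(label)
```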

Illumio streamlines the naming process in a few simple steps:

- Step 1: Start from your “source of truth,” whether that is a CMDB, a spreadsheet, a list of tags or some combination of these. Even if you do not believe it to be completely accurate, it is a usable starting point: Illumio collects metadata from each workload and can automatically update the data from the network and workloads.

- Step 2: Use the information from the network. It is likely, for example, that production servers sit in a different subnet from the development servers. While it is not practical to start writing rules using IP addresses, the network information provides a good starting point for generating names.

- Step 3: Use the hostnames. While inconsistencies may have built up over time, identifying any consistent part of the string and parsing that for as many workloads as possible will fill in the gaps. This information can come from almost any source.

So far, you have taken the existing source of truth, discovered potential environments, then identified hostname information that could highlight the server role, such as Web or Database.
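
The three steps can be sketched as a simple fallback chain: trust the existing record first, then infer the environment from the subnet, then infer the role from the hostname. This is an illustrative Python sketch under stated assumptions, not Illumio's implementation. The subnet map, the hostname convention (a role prefix followed by digits) and all function names are hypothetical.

```python
import ipaddress
import re

# Hypothetical mapping of subnets to environments (Step 2).
SUBNET_ENVIRONMENTS = {
    ipaddress.ip_network("10.1.0.0/16"): "Production",
    ipaddress.ip_network("10.2.0.0/16"): "Development",
}

# Hypothetical hostname convention: a role prefix followed by digits (Step 3).
ROLE_PREFIXES = {"web": "Web", "db": "Database"}
HOSTNAME_PATTERN = re.compile(r"^(?P<prefix>[a-z]+)\d+", re.IGNORECASE)


def environment_from_ip(ip):
    """Return the environment whose subnet contains the address, else None."""
    address = ipaddress.ip_address(ip)
    for network, environment in SUBNET_ENVIRONMENTS.items():
        if address in network:
            return environment
    return None


def role_from_hostname(hostname):
    """Return the role implied by the consistent part of the hostname, else None."""
    match = HOSTNAME_PATTERN.match(hostname)
    if match is None:
        return None
    return ROLE_PREFIXES.get(match.group("prefix").lower())


def suggest_labels(hostname, ip, record=None):
    """Prefer the source-of-truth record (Step 1); fall back to Steps 2 and 3."""
    record = record or {}
    return {
        "environment": record.get("environment") or environment_from_ip(ip),
        "role": record.get("role") or role_from_hostname(hostname),
    }


print(suggest_labels("web01", "10.1.0.11"))
print(suggest_labels("DB02.corp.example", "10.2.3.4", {"environment": "Production"}))
```

In the second call the record's environment wins over the subnet, which reflects the ordering above: fixed, recorded information is used first and inferred values only fill the gaps.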

Once you have completed these steps, you can use the traffic flow information to learn about and identify core services and other functions that may have been missing from the other steps. This could help identify an unknown but essential server.
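
As a rough illustration of learning from flows, the sketch below counts how many distinct clients talk to each destination and port, and surfaces the ones that many workloads depend on. The flow tuples, the `min_clients` threshold and the function name are assumptions for the example. The output is a list of candidates for a person to review, not a verdict that the traffic is legitimate.

```python
from collections import defaultdict


def core_service_candidates(flows, min_clients=3):
    """Return (destination, port, client_count) for widely used services.

    `flows` is an iterable of (source_ip, destination_ip, destination_port).
    """
    clients = defaultdict(set)
    for source, destination, port in flows:
        clients[(destination, port)].add(source)
    return sorted(
        (destination, port, len(sources))
        for (destination, port), sources in clients.items()
        if len(sources) >= min_clients
    )


# Hypothetical flow records: three workloads use the same DNS server.
flows = [
    ("10.1.0.11", "10.0.0.53", 53),
    ("10.1.0.21", "10.0.0.53", 53),
    ("10.2.0.5", "10.0.0.53", 53),
    ("10.1.0.11", "10.1.0.21", 5432),
]
print(core_service_candidates(flows))
```

Counting distinct clients rather than raw flow volume means a single noisy connection does not look like a core service.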

With all this knowledge, it is then simple to apply labels to each workload, which you can use to visualize communications between workloads and apply Zero Trust segmentation policies.

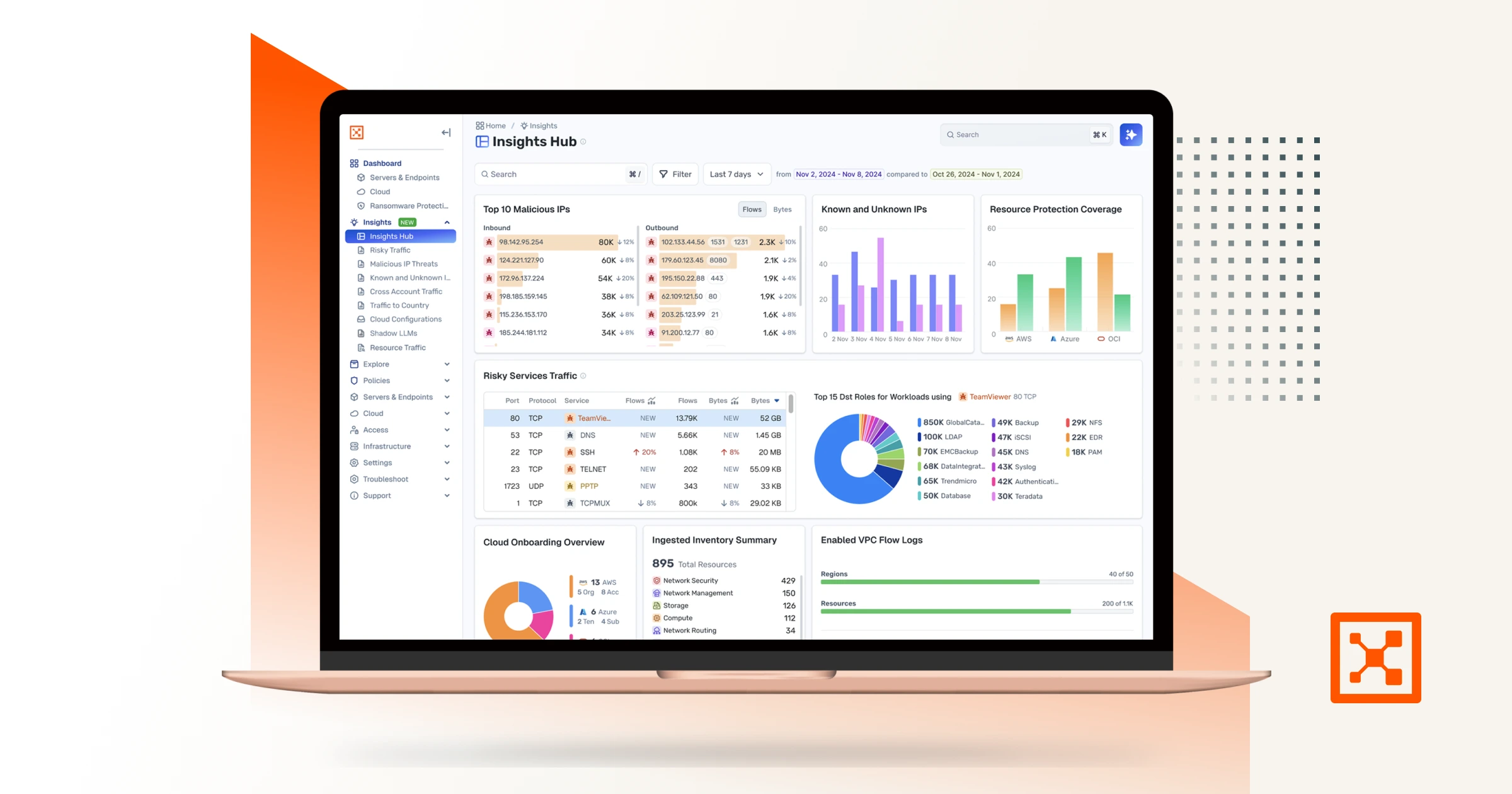

With structured labelling, the map of your environment now looks like this.

The easier the process to label workloads, the simpler the map becomes — and the quicker you obtain real value from segmentation.

Of course, these steps could be undertaken in any order. Some vendors will position traffic learning as the first step. The risk with that approach is that communication can appear legitimate purely because it generates a high number of flows on recognized ports. Undoing the resulting inaccuracies can take a long time, and the time spent cleaning up the map delays the point at which you see value. It is much easier and safer to use fixed information first.

Using the simple tools in Illumio Core, you can easily collect the required data and automate the process of labelling. This approach removes any concerns about a lack of data and potential complexity in identifying and deploying your security policies.

To learn more:

- Read 5 Tips for Simplifying Labelling for Micro-Segmentation.

- Download an overview of Illumio Core for more about Illumio’s approach to Zero Trust segmentation.